Months ago I was chatting with a colleague about a recent data leak (a.k.a. data breach), as we tend to do in this industry. Those terms are defined by Microsoft as “an unauthorized disclosure of sensitive, confidential, or personal information from an organization’s systems or networks to an external party”. Any time I see an article about data breaches I have flashbacks, and fortunately not too much PTSD, as the next few paragraphs show.

My Brief History

It’s been ages, but back in the day I started a data breach tracking project on attrition.org called DLDOS (Data Loss Database – Open Source). While it started in June 2005, the idea actually went back to 2001. We were busy working on the defacement mirror, among other projects, so it took a while to get around to it.

Within a month or two, Lyger took over that project and ran with it. Three years later, in 2008, we made the decision to transfer the project to the Open Security Foundation (OSF) to help ensure its longevity, and there it was renamed DatalossDB. That was the right decision, as the project is still around after two more major transitions. From there, the Open Security Foundation folded in favor of taking that project and the Open Sourced Vulnerability Database (OSVDB) private, as we simply were not getting support, only people using our data for commercial purposes in violation of our license.

The database was renamed to Cyber Risk Analytics and managed by Risk Based Security (RBS) for eleven years. From there, RBS was acquired by Flashpoint, who continued the database under the same name. Since then, that name and branding have slowly been diluted as the data has become another component of their overall Ignite offering.

The point of this history is to illustrate that I am familiar with data breaches. So when I read about a new one, I am always interested in some capacity, both because of that history and because, as best I know, I created the concept of tracking them. I speak with a little authority on this matter, but here comes the twist.

Backstory

The data breach and subsequent leak that caught my attention, and that of my colleagues, this time involved the personal details of U.S. Immigration and Customs Enforcement (ICE) agents. Part of this saga was covered by Ariana Baio, writing for the Independent. In short:

Department of Homeland Security whistleblower provided a dataset of approximately 4,500 immigration personnel after the shooting of 37-year-old Renee Good in Minneapolis.

This data somehow ended up in the hands of Dominick Skinner, who was going to post it via a dedicated website called the ICE List. Before that could happen, a large-scale distributed denial of service (DDoS) attack occurred, apparently traced back to Russia. We’ll skip over the question of why Russia would be interested in helping protect ICE and the U.S. government, as that gets political and disheartening very fast. From the article:

Dominick Skinner, a Netherlands-based immigration activist, told The Daily Beast that his website, ICE List, came under cyberattack Tuesday evening after the publication reported Skinner planned to release personal information, obtained through a whistleblower, about thousands of employees.

[..]

The attack, Skinner said, occurred as he prepared to publicly identify the names of immigration law enforcement officers that were obtained in a dataset from the whistleblower.

Questions

An unexpected recurring pattern is that criminals take the time to break into a site, move laterally, compromise more and more systems, and ultimately find the digital crown jewels, so to speak, but then leak them in a manner that virtually no one ever sees. Oftentimes we only have news articles about it because a journalist or three were fast enough to verify the leak and data before it vanished. Why does this happen at all, let alone so frequently?

Why wouldn’t the data be public if the criminals took that much time to acquire and then leak it? In a way, regardless of their hacking skills, if the data they leaked isn’t available 15 minutes afterwards they are amateurs in one regard. That’s because part of leaking information to the public should mean actually making it available to the public. I am going to give some very general thoughts on why many leaks repeat this pattern as well as explain to readers why some leaks have staying power while others do not. I do not condone or encourage the hacking and leaking of data.

For example, earlier in the day the article was posted, ICE List was responding somewhat sporadically, but clearly having problems staying online. The night before, it was completely offline, presumably when the DDoS started or had the most hosts active in the attack. So why wasn’t the leaked data more readily available, setting aside the DDoS attack, which came much, much later in the entire leak and disclosure process?

There are many hundreds, if not thousands, of examples of this same thing, be it data leaks, web site defacements, or other instances where published data or activity runs the risk of disappearing quickly. I’ll start by stating a few simple facts that are relevant for this topic. First, the data was taken by the criminals from ICE and given to this activist before publication (of course). I don’t know how much time elapsed between compromise, data exfiltration, hand-off to the hacktivist, and publication. Second, the activist made the decision to publicly leak the data via a dedicated web site. That means he had enough time to register the domain, arrange for hosting of the site / data, and create the design for the site.

That is often a significant amount of time in the fast-paced world of leaking data. Someone like Skinner, who receives the data, is presumably in control of how fast they publish it. Waiting a few hours or even a day or two was therefore a choice. I point that out because it is important for this discussion. Unless the person publishing the data has their hands “tied” and would only get the data if they disclosed it in a specific timeframe, they would have time to ensure the data would be more readily available.

We’ll start with an information technology (IT) concept called “single point of failure” (SPOF). This is something that applies to anyone, in any circumstance, who is facing a DDoS attack or is at risk of a data outage. In old layperson terms it basically means “all your eggs in one basket”. Hosting data in one place makes a DDoS attack all the more effective, as the offending party only has to focus efforts on that one place. If data was stored and made available in multiple places, it either spreads their attack thinner, making it less effective, or requires them to dedicate even more resources to conduct the attack. It gets even more difficult when data is hosted on larger providers with more bandwidth that can withstand such attacks.
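To put rough numbers on the eggs-in-one-basket idea, here is a toy model in Python. Everything in it is an assumption made for illustration: the function names are hypothetical, the capacities are made-up figures in Gbps, and it assumes an attacker who splits traffic evenly across targets, which real attacks rarely do so neatly.

```python
def surviving_mirrors(attack_gbps: float, capacities_gbps: list[float]) -> list[float]:
    """Return the capacities of the mirrors that stay up.

    Toy assumption: the attacker splits traffic evenly across every
    mirror, and a mirror stays up only if its capacity exceeds its share.
    """
    if not capacities_gbps:
        raise ValueError("need at least one host")
    per_mirror_load = attack_gbps / len(capacities_gbps)
    return [c for c in capacities_gbps if c > per_mirror_load]


def attack_needed_for_total_outage(capacities_gbps: list[float]) -> float:
    """Traffic needed to saturate every mirror at the same time.

    Toy assumption: the attacker can aim traffic perfectly, so the cost
    is simply the sum of what each mirror can absorb.
    """
    return sum(capacities_gbps)


# One basket: a hypothetical 100 Gbps attack against a single 40 Gbps host.
single = surviving_mirrors(100, [40])

# Three baskets: the same attack spread over three hypothetical mirrors.
spread = surviving_mirrors(100, [40, 40, 10])
```

In this made-up scenario the single host goes dark, while two of the three mirrors stay reachable because each one only sees a third of the traffic. Taking all three offline at once would need 90 Gbps aimed precisely, versus 40 Gbps for the lone host.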

But how hard is it to get the data in more places? It isn’t. It just takes a little effort, often as much as or less than many sophisticated leakers have taken to get a web site ready. To keep control over the leaked data and potentially have a greater chance it remains available, sophisticated leakers set up a redundant mirror of the site and data. This is often done via an Internet Service Provider (ISP) in a different geolocation. If one is in the United States, the other backup is created in e.g. Europe, South America, or Australia. Equally important, they choose a hosting provider that will not remove the data the first time a threatening email is received, making it more resilient.

Once the data is set up, there are a handful of big providers that help protect against DDoS attacks by hosting your data on their network or routing traffic through them to get to you. Most are commercial, like Akamai, but there is a well-known, popular one with a free plan called CloudFlare, or ClownFlare as I like to call them for other reasons. The free plan can be hit-or-miss and may not offer much in the way of protection, but free is free.

Where else does that data sometimes live, even if temporarily, yet long enough for the types of professions listed above to examine the data for their research? We’ll start with an obvious one, the Wayback Machine at archive.org, which attempts to make a mirror of as many web sites as possible, and to do so more than once to document the changes. That is a noble effort, and given the incredible volume of sites out there, they do their best. Alternatively, sophisticated leakers sometimes trigger it to save their page if it isn’t archived already. But the Internet Archive is often besieged by DDoS attacks and connectivity issues of its own. On the other hand, they have their own lawyers and typically won’t immediately fold should they get a nasty letter.

Some other sophisticated leakers use GitHub / Gist, which allows account creation and immediate hosting of their content, with a serious amount of bandwidth that makes a DDoS attack extremely difficult. It’s important to note that GitHub responds quickly to complaints. While they seem to be fair in handling them, they are likely to favor their own safety over a random free account on their service and remove material that violates their terms of service.

Another service that is sometimes used by leakers is Pastebin.com, run by a non-American and favored by those that fear the United States government. Its appeal to many different people is that the site can be used freely, has a simple interface, and has high connectivity. Similar to Wayback, there is also Archive.today, which allows anyone to trigger an archive of content and has hosting in more than one country.

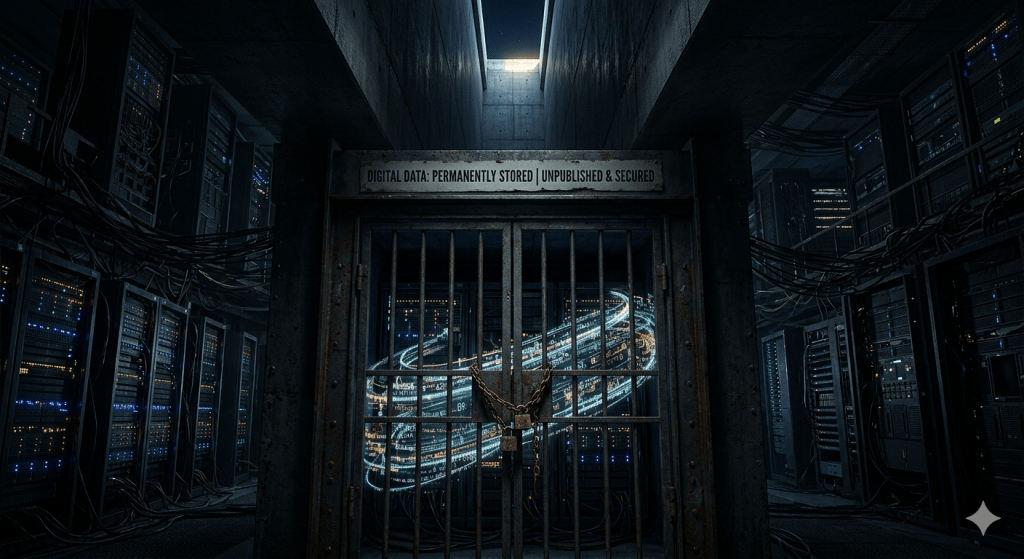

Swapping to a different format, and one that is extremely resilient to DDoS attacks, sophisticated leakers have in some cases put the data on a blockchain. Most people know the most iconic use of this technology as Bitcoin (BTC) and other forms of digital currency. However, that is the tip of the iceberg for the varied and potential uses of the technology. The critical part is that it is completely decentralized, so the data lives on many, many computers around the world. The bigger the blockchain and the more users, the more computers that potentially store that data. So attempts to attack it become extremely difficult, bordering on impossible. Flooding a young kid in Florida or a currency trader in Minsk doesn’t successfully suppress the leaked data.

To my knowledge, there has not been a data leak that utilized all of the forms of resiliency covered. To do that requires a considerable amount of time and cost which generally is not the modus operandi of data leakers, even sophisticated ones.

For Skinner’s ICE data leak, the Wayback Machine had repeated failures attempting to take a copy, throwing 503 errors. No other redundant sites were apparently set up. If the journalist was provided a copy of the data, they kept it to themselves, which is very typical as their job is to report on the leak, not leak the data themselves. The original criminals that took the data apparently did not leak it to others. Thus, all of the data eggs were in that one server / basket, and it was nearly impossible to determine the scope and severity of the leak. Even as of today you can see that the Wayback Machine still hasn’t been successful in obtaining a mirror of the site.

Conclusion

I’m not going to dig up a bunch of citations for this next bit, but trust me based on my history and ‘credentials’ when it comes to tracking and archiving data leaks. This is a common pattern for data leaks, especially high profile ones where there is a vested interest and capability by the targeted party to keep the data from seeing the light of day. This series of events often stems from (h)activists wanting to score “cool political / media points” rather than focus on seeing the task through.